Too much runtime glue

Prompt logic, tool definitions, model settings, network boundaries, and worker orchestration end up spread across too many systems.

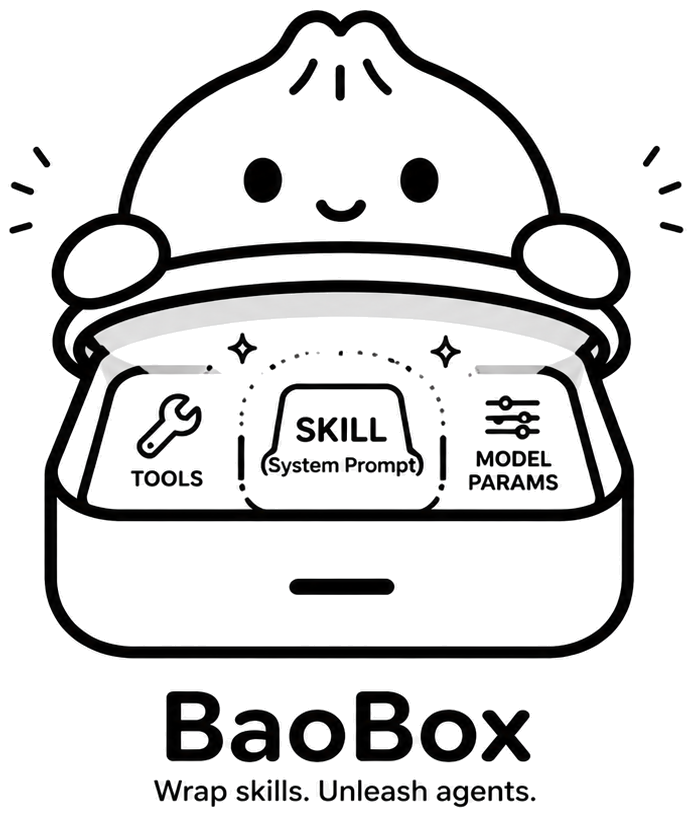

BaoBox is the runtime for production-ready AI skills.

Define a skill as a prompt, tools, and model parameters. BaoBox turns that definition into a stateless agent API endpoint, with no Docker, servers, networking, orchestration, eval infrastructure, or observability work on your side.

Pre-launch now. We are opening early access with a small group of design partners and early teams.

    name: support-agent
    prompt: |
      You are Acme's support triage agent.
    tools:
      - searchTickets
      - createIncident
    model:
      provider: openai
      name: gpt-4.1-mini
      temperature: 0.2

    {
      "output": "Issue routed to infra-oncall",
      "steps": 4,
      "eval_score": 0.94
    }

Problem

Teams start with a prompt, then inherit everything around it: tool wiring, endpoint hosting, logs, eval loops, retry logic, scaling, auth boundaries, and production debugging.


You ship hosting, queues, containers, and logging pipelines before the first skill proves it should exist.

Without step-level traces and evals, every agent bug turns into forensic work across prompts, tools, and model behaviour.

Solution

BaoBox treats skills as the deployable unit. You package the system prompt, attach tools, set model parameters, and BaoBox handles the rest of the runtime surface.
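To make "skill as the deployable unit" concrete, here is a minimal sketch of that unit as plain Python data. The field names mirror the example definition on this page; the `Skill` and `ModelConfig` classes and the `problems` check are illustrative assumptions, not BaoBox's actual schema or SDK.

```python
from dataclasses import asdict, dataclass


@dataclass(frozen=True)
class ModelConfig:
    # Fields taken from the example definition; the real schema may differ.
    provider: str
    name: str
    temperature: float


@dataclass(frozen=True)
class Skill:
    # Hypothetical container for everything a skill bundles together.
    name: str
    prompt: str
    tools: tuple[str, ...]
    model: ModelConfig

    def problems(self) -> list[str]:
        """Return readable problems; an empty list means the skill looks well-formed."""
        found = []
        if not self.name.strip():
            found.append("name is empty")
        if not self.prompt.strip():
            found.append("prompt is empty")
        if len(set(self.tools)) != len(self.tools):
            found.append("tools contains duplicates")
        return found


support_agent = Skill(
    name="support-agent",
    prompt="You are Acme's support triage agent.",
    tools=("searchTickets", "createIncident"),
    model=ModelConfig(provider="openai", name="gpt-4.1-mini", temperature=0.2),
)

# The whole skill serialises to one plain dictionary.
skill_as_dict = asdict(support_agent)
```

Keeping the prompt, tools, and model settings in one immutable value is the point of the sketch: there is a single object to review, version, and deploy.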

How it works

Define the skill with system prompt, tools, model selection, and runtime parameters.

BaoBox turns the skill definition into a production-ready API endpoint with no server layer to manage.

Call the endpoint from apps, workflows, back office tools, or customer-facing products.

Inspect traces, step-level evals, tool execution, and response quality without bolting on another stack.
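The "call the endpoint" step might look roughly like the sketch below. Everything about the wire format here is an assumption: the `/skills/<name>` path, the `{"input": ...}` request body, and bearer-token auth are placeholders, and only the response fields (`output`, `steps`, `eval_score`) come from the example response on this page.

```python
import json
import urllib.request


def build_request(base_url: str, skill: str, user_input: str, api_key: str) -> urllib.request.Request:
    """Build (but do not send) a POST request to a hypothetical skill endpoint."""
    body = json.dumps({"input": user_input}).encode("utf-8")  # assumed body shape
    return urllib.request.Request(
        url=f"{base_url}/skills/{skill}",  # assumed URL layout
        data=body,
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",  # assumed auth scheme
        },
        method="POST",
    )


def parse_response(raw: bytes) -> dict:
    """Decode a response and check it has the fields shown in the example."""
    payload = json.loads(raw)
    missing = {"output", "steps", "eval_score"} - payload.keys()
    if missing:
        raise ValueError(f"response is missing fields: {sorted(missing)}")
    return payload


request = build_request(
    "https://baobox.example",  # placeholder host, not a real BaoBox URL
    "support-agent",
    "Checkout is returning 502s for EU customers",
    "test-key",
)

# Parsing the example response from this page, without touching the network.
sample = b'{"output": "Issue routed to infra-oncall", "steps": 4, "eval_score": 0.94}'
result = parse_response(sample)
```

Sending the request would be a matter of passing `request` to `urllib.request.urlopen` and handing the response bytes to `parse_response`; that part is left out so the sketch runs offline.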

Differentiators

Ship an endpoint fast enough to validate value before infra grows around it.

Keep idle skills cheap, then wake them up when workloads actually arrive.

Closed-source runtime boundaries lower the operational and compliance burden.

Swap models and providers without rewriting the platform around each skill.

Trace every run, inspect every step, and tighten behaviour with real evidence.
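Swapping models without touching the rest of a skill can be pictured as a change to one block of the definition. The sketch below assumes the skill is held as a plain dictionary shaped like the example on this page; `with_model` is a hypothetical helper, and `other-provider` and `other-model` are placeholder names rather than real identifiers.

```python
import copy


def with_model(skill: dict, provider: str, name: str) -> dict:
    """Return a copy of the skill with a different model, leaving the original untouched."""
    updated = copy.deepcopy(skill)
    # Keep other model settings (such as temperature) and override only provider and name.
    updated["model"] = {**updated.get("model", {}), "provider": provider, "name": name}
    return updated


skill = {
    "name": "support-agent",
    "prompt": "You are Acme's support triage agent.",
    "tools": ["searchTickets", "createIncident"],
    "model": {"provider": "openai", "name": "gpt-4.1-mini", "temperature": 0.2},
}

candidate = with_model(skill, "other-provider", "other-model")
```

Because the prompt and tool list are carried over unchanged, the two variants can be compared on the same inputs, with traces and eval scores showing whether the swap helped.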

Use cases

Ops, support, revops, legal, and finance helpers behind authenticated APIs.

Classification, extraction, summarisation, and routing in production pipelines.

Trigger tools, evaluate outputs, and hand results back into existing systems.

Wrap retrieval, triage, ticketing, and escalation logic behind a single endpoint.

Run brand-safe generation workflows with explicit tool and model controls.

Expose secure skill APIs to apps that need action-taking, not chat demos.